AR glasses won’t be ready the moment that we have 5G. That doesn’t mean they aren’t coming.

By Jon Joehnig 2020-11-16

Source: https://arpost.co/2020/12/16/perceive-future-ar-glasses-edge-computing/

As soon as we have 5G coverage, we’ll have ubiquitous AR glasses, right? Some people would have you believe that. It’s easy to want to believe that. However, more needs to happen before lightweight, compact, and efficient glasses can become a reality.

Perceive doesn’t make AR glasses. They don’t make depth sensors, or controllers, or lenses either. Perceive makes chips. ARPost talked to Perceive’s VP of Marketing, David McIntyre, formerly of Xperi, about a non-XR approach that could drive the future of consumer wearables.

Why Isn’t 5G the Answer?

Right now, AR glasses do exist. However, almost all examples are in enterprise. Make no mistake, these glasses can do incredible things, but most of them are more like “headsets” than glasses. They’re often bulky and heavy. Some are even meant to mount onto headsets.

“Anything that weighs more than 30-40 grams, you start to notice it,” McIntyre said of headsets in long use sessions. After all, the AR glasses that many of us look forward to would be worn virtually all the time.

Some lightweight consumer models manage weight by pairing with an external processor, often a mobile phone, over a tether or a wireless connection. That’s one of the many reasons that better wireless connectivity is such a big expectation in XR, but wireless connectivity won’t solve all of our problems. Right now, it brings problems of its own.

“5G takes a huge amount of power. So, while 5G has speed and I doubt it has the latency, what’s the power impact?” asks McIntyre. “When you start adding processing, heat becomes a problem.”

Unless we want to incorporate “big, external cooling fans” onto glasses frames, which McIntyre admits might look pretty cool, this issue needs to be addressed.

So, now that McIntyre has splashed cold water on our dreams of the future, what is Perceive doing about it?

If 5G Isn’t the Answer, What Is?

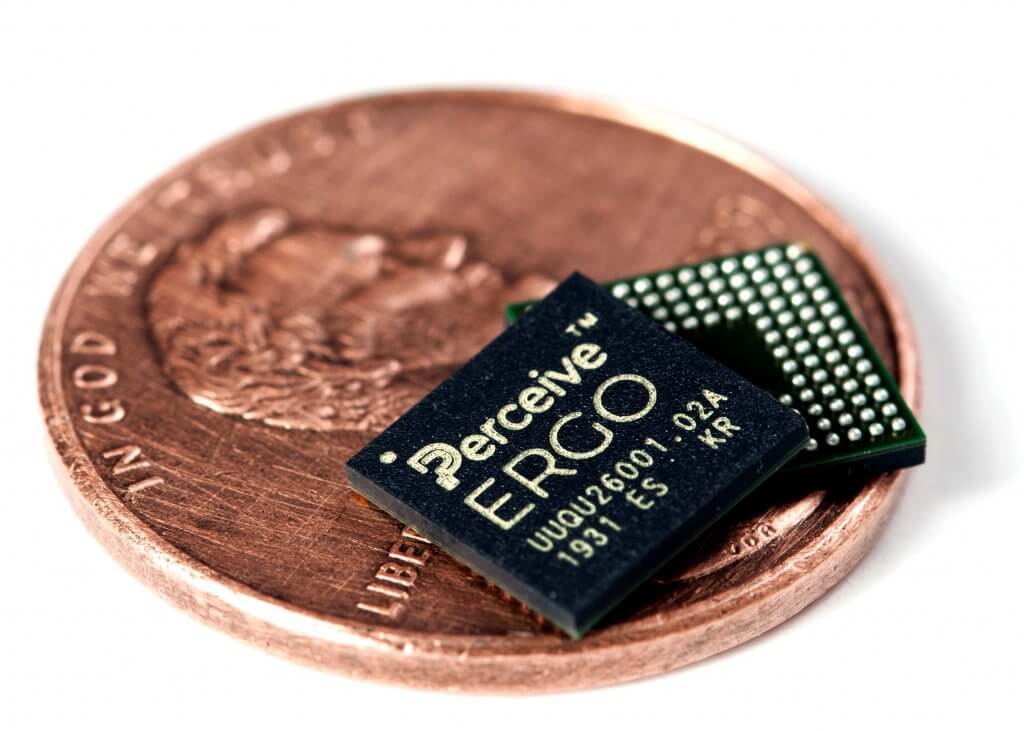

Perceive makes computer chips. Almost unimaginably small computer chips that do an almost unimaginably big job.

Their flagship product, Ergo, is an edge inference processor. It runs neural networks on the device itself, so a smart device can send the cloud only the results of that processing rather than the raw data.

To be clear, Ergo isn’t the first product to do this. Some next-gen gaming computers use edge computing to speed up rendering. That doesn’t mean that Ergo isn’t unique, particularly in terms of its impending impact on AR glasses.

Right now, smart devices work by sending everything to the cloud. This is an immensely data-intensive process that requires a great deal of processing. Further, even if the main data doesn’t seem potentially sensitive, it can be a goldmine for hackers.

McIntyre uses the example of a digital doorbell and security camera that sends info to the cloud when it senses movement. Suppose that a dog enters the camera frame. The camera would send the footage or a snapshot to the cloud. It takes a lot of energy and, while the footage of the dog might not be worth stealing, information in the background might be.

Now, take a security system with an Ergo chip. The chip uses neural networks to recognize the dog and sends that inference to the cloud rather than the complete footage.

“If you want to send inferences to the cloud, that’s less data,” explained McIntyre. “Sending ‘dog’ rather than sending a picture of a dog, that’s less valuable to attackers.”
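To make McIntyre’s point concrete, here is a rough sketch of the size difference between uploading a camera frame and uploading an inference. The frame resolution, the JSON message shape, and the confidence value are all assumptions for illustration; none of them come from Perceive or describe how Ergo actually formats its output.

```python
import json

# Hypothetical frame size: one uncompressed 1280x720 RGB frame.
# This is an assumption for illustration, not a figure from the article.
FRAME_WIDTH, FRAME_HEIGHT, BYTES_PER_PIXEL = 1280, 720, 3


def raw_frame_payload() -> bytes:
    """Stand-in for a camera frame: zero bytes of the assumed frame size."""
    return bytes(FRAME_WIDTH * FRAME_HEIGHT * BYTES_PER_PIXEL)


def inference_payload(label: str, confidence: float) -> bytes:
    """A hypothetical message an on-device classifier might upload instead."""
    message = {"label": label, "confidence": confidence}
    return json.dumps(message).encode("utf-8")


frame = raw_frame_payload()
inference = inference_payload("dog", 0.97)

print(f"raw frame: {len(frame)} bytes")
print(f"inference: {len(inference)} bytes")
print(f"the frame is roughly {len(frame) // len(inference)}x larger")
```

Real cameras compress their footage, so the gap in practice would be smaller than this uncompressed comparison suggests, but the inference message stays tiny either way and contains nothing from the background of the scene.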

More About Ergo

If you follow the world of XR processing chips, you may be wondering about Qualcomm’s Snapdragon XR line. It’s true that the original Snapdragon XR chip, launched in 2018, was the first of its kind, and the second generation, launched about a year ago, is lauded for enabling the stand-alone headset boom that we’re in now. But the chips have two big drawbacks.

They’re big, and their power consumption is measured in watts. They work in mobile devices and headsets, but are a touch big for glasses.

Ergo is 7×7 mm and requires only 20 mW. While running, the chip heats up to just two to three degrees above the ambient temperature. The chip uses a standard interface, making it easy for hardware designers to work with. It’s already running in a number of connected devices on the market, though not in any XR devices (yet).
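For a sense of scale, the back-of-the-envelope arithmetic below compares how long a small battery could power a 20 mW processor versus one drawing a full watt. Only the 20 mW figure comes from the article. The 500 mWh battery capacity and the 1 W draw are assumptions chosen for illustration, and the calculation ignores displays, radios, and everything else in a pair of glasses.

```python
def runtime_hours(battery_mwh: float, draw_mw: float) -> float:
    """Hours a battery of the given capacity lasts at a constant power draw."""
    return battery_mwh / draw_mw


BATTERY_MWH = 500.0          # assumed capacity of a small glasses battery
ERGO_DRAW_MW = 20.0          # figure quoted in the article
WATT_CLASS_DRAW_MW = 1000.0  # assumed 1 W, the low end of "measured in watts"

ergo_hours = runtime_hours(BATTERY_MWH, ERGO_DRAW_MW)
watt_class_hours = runtime_hours(BATTERY_MWH, WATT_CLASS_DRAW_MW)

print(f"20 mW processor: {ergo_hours:.1f} hours")
print(f"1 W processor:   {watt_class_hours:.1f} hours")
```

Under these assumptions the processor alone accounts for a fiftyfold difference in runtime, which is the kind of gap that matters when the battery has to fit inside a glasses frame.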

“We haven’t focused on AR, it’s just that what we’ve done for other markets are coming together in AR,” said McIntyre. “I’m just waiting to see the market emerge.”

While he didn’t share details, McIntyre did say that AR hardware producers, including wearables manufacturers, have shown interest in Perceive and Ergo, suggesting that it could be enabling scaled-down and beefed-up consumer AR glasses in the near future.

The Future of AR Glasses?

If all of this sounds like an admittedly non-XR person talking about the future of XR, a final analogy is in order. McIntyre likens AR glasses to a car. No one person makes a car. The expert on the engine is not the expert in the computer or the body or the seats.

When we do get AR glasses, it isn’t too much to expect that the expert in the computer will not be the expert in the displays or the frames. And I’m comfortable with Perceive being the experts in computers.