Make smarter devices with truly useful neural networks

Tiny only gets you so far. Ergo runs large neural networks at full frame rates and supports a wide variety of network architectures and types, including standard CNNs, RNNs, LSTMs, and more. Ergo is flexible and powerful enough to handle myriad machine learning tasks, from object classification and detection, to image segmentation and pose estimation, to audio signal processing and language. You can even ask it to multitask – Ergo can run more than one network at a time.

Despite its processing heft, Ergo requires no external DRAM, and its small 7 mm × 7 mm package makes it well suited to compact devices such as cameras, laptops, or AR/VR glasses.

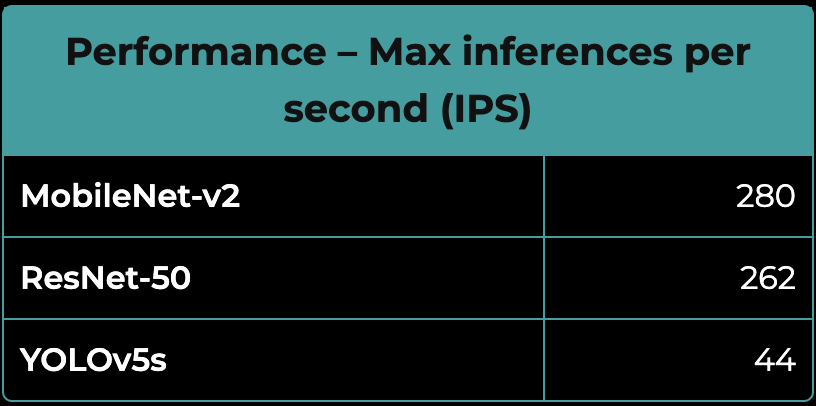

Performance – Max inferences per second (IPS)

| Network | Max IPS |
|---|---|
| MobileNet-v2 | 280 |
| ResNet-50 | 262 |
| YOLOv5s | 44 |
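To put the throughput figures in per-frame terms, the short sketch below converts each maximum IPS value into an average time per inference and compares it with a 30 fps video stream. It assumes inferences run back to back on a single network, so the result is an average time per inference rather than a measured latency; the function names are illustrative and not part of any Ergo tooling.

```python
# Max inferences per second (IPS), copied from the performance table.
MAX_IPS = {"MobileNet-v2": 280, "ResNet-50": 262, "YOLOv5s": 44}


def avg_ms_per_inference(ips: float) -> float:
    """Average time per inference in milliseconds at a given throughput."""
    return 1000.0 / ips


def headroom(ips: float, fps: float = 30.0) -> float:
    """Ratio of peak throughput to the frame rate (above 1 means the stream keeps up)."""
    return ips / fps


for name, ips in MAX_IPS.items():
    print(
        f"{name}: {avg_ms_per_inference(ips):.1f} ms per inference, "
        f"{headroom(ips):.1f}x headroom at 30 fps"
    )
```

A headroom value above 1 means one inference per frame fits within the frame period; the surplus is the budget that could, in principle, go to a second network or a higher frame rate.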

Keep your power (and keep your cool)

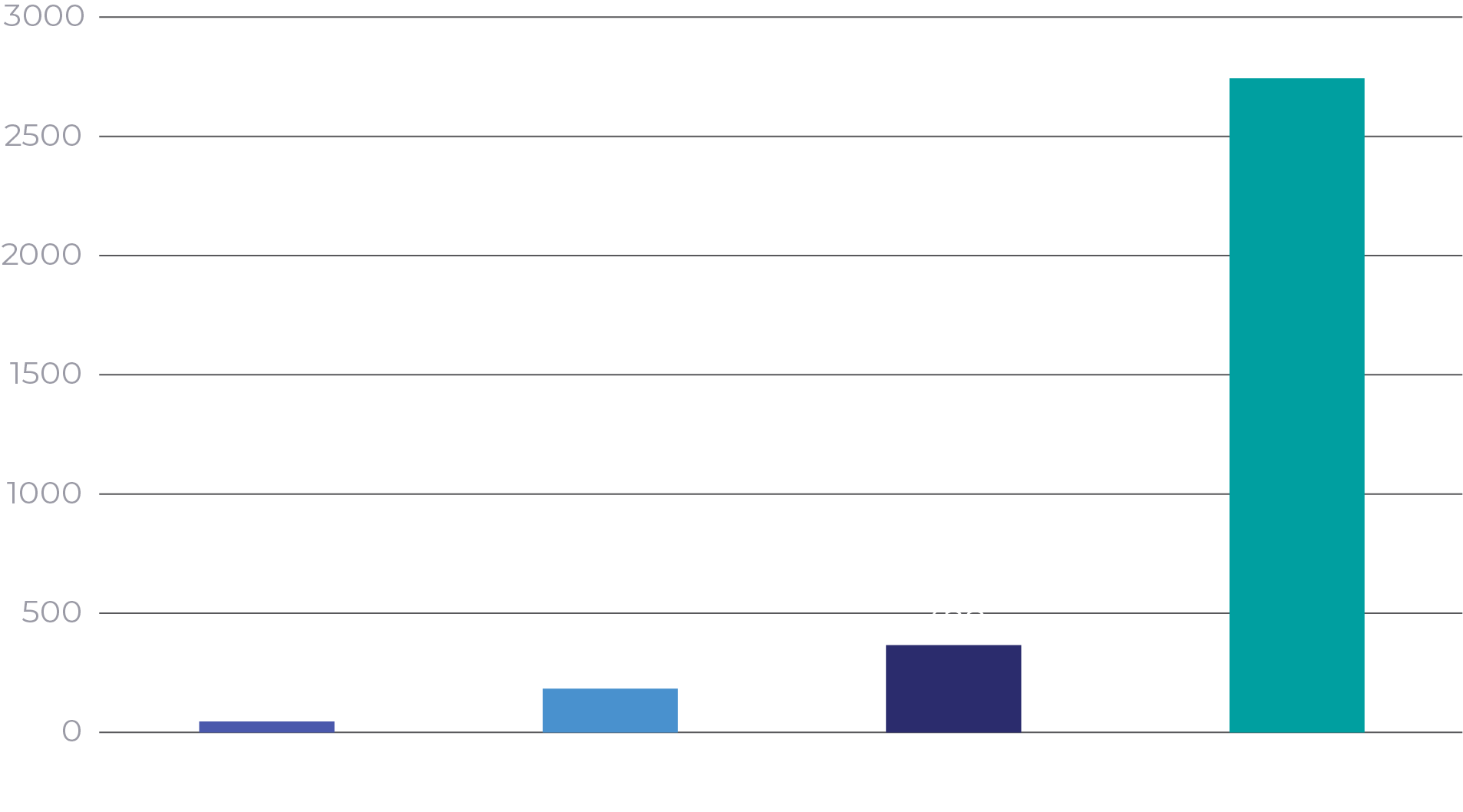

Ergo offers 20 to 100 times the power efficiency of alternatives, running inferences on 30 fps video feeds using as little as 9 mW of compute power. This means your device could provide unparalleled battery life and produce considerably less heat, enabling smaller and more versatile product packaging.
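As a rough illustration of what the 9 mW figure implies, the sketch below works out the energy spent per frame and the equivalent inferences per second per watt. It assumes one inference per frame at 30 fps and takes the 9 mW best case at face value; the text does not say which network that figure applies to, so the result should not be read as a ResNet‑50 number. The function names are illustrative only.

```python
def energy_per_inference_mj(power_mw: float, fps: float) -> float:
    """Energy per inference in millijoules (mW divided by inferences per second)."""
    return power_mw / fps


def ips_per_watt(power_mw: float, fps: float) -> float:
    """Inferences per second per watt, assuming one inference per frame."""
    return fps / (power_mw / 1000.0)


POWER_MW = 9.0  # best-case compute power quoted in the text
FPS = 30.0      # video frame rate quoted in the text

print(f"Energy per inference: {energy_per_inference_mj(POWER_MW, FPS):.2f} mJ")
print(f"Efficiency: {ips_per_watt(POWER_MW, FPS):.0f} IPS/W")
```

Under these assumptions each frame costs about 0.3 mJ of compute energy, which is the quantity that ultimately drives battery life and heat.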

ResNet‑50 Power Efficiency (IPS/W)

Keep processing (and user data) local

Local processing makes devices respond faster and protects their users’ privacy. Reducing or eliminating the need to send data to the cloud for inference reduces latency, improves power efficiency, saves cloud costs, and minimizes the opportunity for sensitive data to be intercepted.

Ergo makes all this possible without trading away device features or performance, and further enhances on‑device security and privacy by providing encryption that protects the neural networks, software, and boot access to the chip.

Start now

Ergo is production‑ready, supported by a full suite of development tools to ensure successful integration into your device.

Download the Product Overview

NVIDIA Jetson Xavier NX: https://developer.nvidia.com/embedded/jetson-benchmarks (Feb 27, 2022)

Qualcomm Snapdragon 888: https://www.qualcomm.com/content/dam/qcomm-martech/dm-assets/documents/tech_summit_2020-ai_-_jeff.pdf

Hailo Hailo-8: https://hailo.ai/developer-zone/benchmarks/