By Jim McGregor 2020-04-05

Source: https://www.forbes.com/sites/tiriasresearch/2020/04/06/perceive-exits-stealth-with-super-efficient-machine-learning-chip-for-smarter-devices/#3e06c7496d9c

This article has been updated to report performance in TOPS (integer operations) rather than the originally reported FLOPS (floating-point operations).

Perceive is a new fabless machine learning processor company that is being spun out of Xperi, with Xperi’s CTO Steve Teig as the new company’s CEO. The company has exited stealth mode and introduced the Ergo processor, designed to bring inference to small devices, offering a level of performance previously possible only in powerful cloud-based inference processors. Ergo delivers over 4 TOPS (tera operations per second) of peak performance at less than a tenth of a watt of peak power. Efficiency is 55 TOPS/watt, a full order of magnitude better than existing inference processors.
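As a rough sanity check on the figures above, the short Python sketch below works out what power draw 4 TOPS and 55 TOPS/watt would imply together. It assumes, purely for illustration, that both numbers describe the same operating point, which the article does not state; the function and constant names are hypothetical.

```python
# Figures quoted in the article; treating them as one operating point
# is an assumption made only for this back-of-the-envelope check.
PEAK_TOPS = 4.0                # "over 4 TOPS" peak performance
EFFICIENCY_TOPS_PER_W = 55.0   # "55 TOPS/watt"
POWER_CEILING_W = 0.1          # "less than a tenth of a watt"


def implied_power_watts(tops: float, tops_per_watt: float) -> float:
    """Power implied by a throughput figure and an efficiency figure."""
    return tops / tops_per_watt


def minimum_efficiency(tops: float, power_ceiling_w: float) -> float:
    """Lowest efficiency that keeps the given throughput under a power ceiling."""
    return tops / power_ceiling_w


power_w = implied_power_watts(PEAK_TOPS, EFFICIENCY_TOPS_PER_W)
floor_tops_per_w = minimum_efficiency(PEAK_TOPS, POWER_CEILING_W)

print(f"Implied power at 4 TOPS: {power_w * 1000:.0f} mW")
print(f"Efficiency needed to stay under 0.1 W: {floor_tops_per_w:.0f} TOPS/W")
print(f"Within the stated power ceiling: {power_w < POWER_CEILING_W}")
```

Under that assumption the quoted numbers are mutually consistent: 4 TOPS at 55 TOPS/watt works out to roughly 73 mW, which is below a tenth of a watt.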

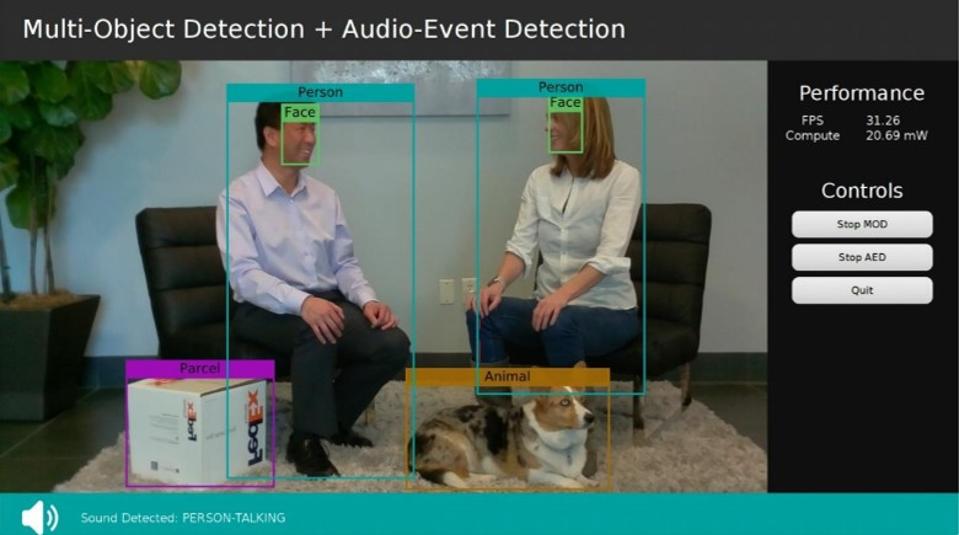

Running today’s advanced neural networks has been well beyond the reach of many small and battery-powered devices. Ergo is designed to run multiple concurrent networks with more than 100 million weights and network sizes exceeding 400 MB. Perceive has demonstrated complex scenarios including multiple neural networks running concurrently. In a multi-sensor demonstration, M2Det detected multiple objects from an HD sensor while audio event detection ran at the same time. In a facial recognition demonstration, M2Det detected objects while a second network ran facial feature detection and a third network ran facial recognition.

“The ability to run multiple, state of the art networks concurrently is a breakthrough for small devices and surprising in a chip that consumes only a tenth of a watt,” said Simon Solotko, Senior Analyst at Tirias Research. “Ergo is the first processor to place best-in-class machine learning research within the reach of smart devices. Processing sensor data offline will completely transform the architecture of cloud-based machine learning services and open significant opportunities for the consumer electronics industry.”

Ergo offers tight integration, including on-board I/O, memory, and inference processing, making the chip ideal for emerging “smarter” devices that do not rely on cloud inference for sensor processing. Keeping sensor data off the cloud is inherently more secure – no one wants their full speech, home video feeds, and the metadata derived from them on the internet. Sensor data can be routed directly to the machine learning processor, which can immediately activate additional sensors or power up a wireless network.

Reducing power consumption is critical to enabling battery-powered devices that don’t rely on home wiring, as well as lightweight devices suitable for wearables and portable electronics. Power savings will come from tasking the cloud less – eliminating false positives and processing raw sensor feeds locally – and from using machine learning as a front line of logic that gates turning on other sensors or creating CPU processes. The power savings will enable new classes of devices with new feature sets.
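The gating idea described above can be illustrated with a minimal Python sketch. Everything in it is hypothetical: `gate_frames`, `GateDecision`, the threshold value, and the toy detector are stand-ins invented for this example and do not represent Perceive’s software or the Ergo API. The point is only that a cheap local inference step decides whether costlier resources are woken at all.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass
class GateDecision:
    frame_index: int
    score: float
    wake: bool  # True means "turn on other sensors / radio / CPU work"


def gate_frames(
    frames: Sequence[List[float]],
    detector: Callable[[List[float]], float],
    threshold: float = 0.8,  # hypothetical confidence cutoff
) -> List[GateDecision]:
    """Run a local detector on each frame and wake downstream
    resources only when its score clears the threshold."""
    decisions = []
    for index, frame in enumerate(frames):
        score = detector(frame)
        decisions.append(GateDecision(index, score, score >= threshold))
    return decisions


def toy_detector(frame: List[float]) -> float:
    """Stand-in for an on-device network: mean value clamped to [0, 1]."""
    return max(0.0, min(1.0, sum(frame) / len(frame)))


# Three fake sensor frames; only the second looks like an event.
frames = [[0.1, 0.2], [0.9, 0.95], [0.4, 0.5]]
decisions = gate_frames(frames, toy_detector)
woken = [d.frame_index for d in decisions if d.wake]
print(woken)  # [1]
```

In this toy run, two of the three frames never leave the device, which is the kind of saving the article attributes to local inference.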

“Local inference will be the next stage of evolution for the Internet of Things and consumer electronics,” continued Solotko. “Smarter devices that employ advanced inference capabilities and lower power should create the innovation necessary to attract users and reassert the role of the consumer electronics device.”

The ability to run multiple state-of-the-art networks concurrently is a breakthrough for small devices. Creating a new design methodology that places inference at the center of device functions should drive device innovation and a new wave of utility for an Internet of smarter things. More detail on the new processor and the demonstrations is available in a free white paper.